NXP NPUs: Understanding the Shift

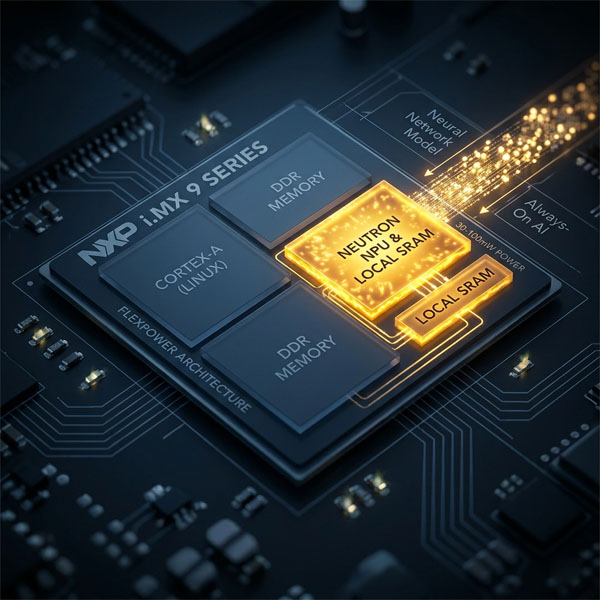

NXP recently introduced their latest i.MX937 Application Processor with on-chip Neural Processing Unit (NPU).

It’s the opportunity to sit back and reflect on NXP’s NPU journey since their visionary i.MX8M Plus.

I have a soft spot for NXP processors with NPUs.

Not because they are the most powerful on paper, but because they operate where embedded systems actually live: under power constraints, with limited memory bandwidth, and with very little tolerance for architectural mistakes.

NXP i.MX application processors are complex machines. They combine CPUs, GPUs, ISPs, NPUs, real-time cores, and a wide range of peripherals. This article does not attempt to cover all of that.

We will focus on one aspect only: NPU and deep learning execution.

And one more thing: this article is not about a product launch. It is about a shift in how embedded AI/ML systems are built.

To understand that shift, we will look at a few key devices:

- i.MX8M Plus

- i.MX93

- i.MX95

- i.MX937

At first glance, they all “have an NPU”.

In practice, they represent different stages of the same transition.

For the Impatient

If you only have 30 seconds, this is what matters.

Essential metrics

| SoC | CPU | NPU | AI Perf | Low-power AI (NPU power) |

|---|---|---|---|---|

| i.MX8M Plus | Cortex-A53 | Vivante (VeriSilicon) | ~2.3 TOPS | No (system-level only) |

| i.MX93 | A55 + M33 | Arm Ethos-U65 | ~0.5 TOPS | ~30–60 mW |

| i.MX95 | A55 (higher-end) | eIQ Neutron | ~2 eTOPS | ~50–100 mW* |

| i.MX937 | A55 + M33 + M7 | eIQ Neutron | ~2 eTOPS | ~40–80 mW* |

* Estimated ranges depending on model and memory usage.

What actually differentiates them

| SoC | Model compilation | Memory behavior | NPU origin | Key takeaway |

|---|---|---|---|---|

| i.MX8M Plus | Runtime (dynamic) | DDR-bound | Third-party | Easy to start, hard to optimize |

| i.MX93 | Offline (Vela) | Local SRAM-oriented | ARM | Lower TOPS, low-power for always-on AI |

| i.MX95 | Offline (eIQ) | SRAM + planned transfers | NXP | Same TOPS as i.MX8, much higher efficiency |

| i.MX937 | Offline (eIQ) | SRAM + planned transfers | NXP | Combines TOPS and low-power in a balanced platform |

On i.MX93 and i.MX9 devices, AI is no longer a “high-power feature”. It becomes something you can afford to run continuously.

Everything else in this article explains why these differences exist.

From acceleration to architecture

Let’s take a step back and look at how NXP got here.

The i.MX8M Plus: the mother ship

The i.MX8M Plus was the entry point to embedded ML for many teams, including me. It consumed real power, but delivered unprecedented TOPS for this price range.

It introduced a simple and appealing model: a .tflite file is both your development artifact and your runtime entry point. You deploy it, enable the delegate, and the system dynamically decides what runs on the NPU.

That simplicity is real, but it comes with a structural limitation that is easy to miss:

Most performance issues on i.MX8 are not compute issues. They are memory issues.

The NPU sits in a shared DDR architecture. Data constantly moves between compute units, and even well-supported models can suffer from excessive memory traffic.

The system feels flexible, but the trade-off is unpredictability.

eIQ on i.MX8: useful, but optional

In that context, eIQ never really felt essential.

It was a convenient aggregation of existing tools, wrapped together with demos and integration layers. The underlying technologies were already accessible elsewhere in their latest versions.

You could use it, or bypass it entirely. Many teams did.

The i.MX93: when power becomes the constraint

With the i.MX93, the priorities change.

At first glance, the NPU looks significantly less capable than the one in the i.MX8M Plus. With roughly 0.5 TOPS versus ~2.3 TOPS, it is easy to conclude that this is a step backward. It is not.

It is a redefinition in what “performance” actually means.

The introduction of an Arm Ethos-U NPU, controlled by a Cortex-M33, enables a different execution model: inference without waking the main system.

A simple example illustrates this well:

A camera monitors a scene continuously. A lightweight model runs on the NPU to detect motion or presence. The Cortex-A55 cluster, running Linux, remains asleep. Only when an event is detected does the system wake up and perform further processing.

In that context, raw TOPS is no longer the primary metric. What matters is how much work you can do without ever leaving a low-power state.

This is not just an optimization, it is a shift in system design. Instead of accelerating everything, you decide when computation is worth doing.

The i.MX95: same compute, different efficiency

The i.MX95 does not dramatically increase TOPS compared to the i.MX8M Plus.

At first glance, this can be surprising. One might expect a new generation to bring a clear jump in raw compute. Instead, the numbers remain in the same range.

This is not a limitation. It is a deliberate shift in strategy.

With the i.MX95, NXP moves away from third-party NPU IP and introduces its own architecture: eIQ Neutron. This is a major change.

Until the i.MX8M Plus, NXP relied on external IP (VeriSilicon Vivante). With the i.MX93, they adopted ARM’s Ethos-U. With the i.MX95, they take full control of the NPU architecture.

This is not just about performance. It is about ownership. Owning the NPU means:

- control over the execution model

- control over the memory architecture

- control over the toolchain

And this is where the real gains come from.

The i.MX95 is not faster because it computes more. It is faster because it computes more efficiently, by minimizing data movement and planning execution ahead of time. NXP’s claim of up to 3× speedup on some workloads reflects exactly that.

The i.MX95 does not fix compute. It fixes data movement. And the move to in-house IP is what makes that possible.

The i.MX937: making the transition accessible

The i.MX937 brings the i.MX9 architecture into a much more accessible and balanced space.

At its core, it inherits what made the i.MX93 so compelling: a FlexPower architecture capable of running inference in low-power states, with the main application cores powered down.

That capability is preserved. The system can still observe, detect, and decide without waking Linux.

But this time, the story does not stop at efficiency. With roughly 2 eTOPS, the i.MX937 delivers a massive step up in compute compared to the i.MX93, while keeping the same low-power philosophy.

This changes the nature of what can be done in standby: not just simple detection, but more complex models with richer decision logic before wake-up

In practice, this is the first time in the lineup where you get both:

- meaningful ML compute capability

- and a sober power architecture

The i.MX937 is a true crossover. It sits between two worlds:

- the minimalist, ultra-efficient design of the i.MX93

- the higher processing capability of the i.MX95

And it does so without forcing you to give up either.

This is what makes it particularly interesting for real products: systems that need to be always aware, but not always fully awake.

The real break: how models are executed

The most important change is not visible in the hardware. It lies in the workflow.

Conceptual shift: memory and execution

i.MX8M Plus

i.MX9 (93 / 937 / 95)

Model (.tflite)

↓

Runtime delegate

↓

CPU ↔ DDR ↔ NPU ↔ DDR ↔ CPU

Model (.tflite)

↓

Offline compilation (Vela / eIQ)

↓

Compiled model

↓

NPU execution with planned SRAM usage

Dynamic execution, heavy DDR traffic, unpredictable performance.

Planned execution, controlled data movement, predictable behavior.

On i.MX9 devices, the .tflite file remains the entry point, but it is no longer a generic model. After compilation with Vela or eIQ, it becomes a container embedding precompiled execution segments for the NPU, typically wrapped as custom operators inside the graph. The runtime still loads a .tflite, but it no longer interprets it dynamically. Instead, it executes a model whose behavior has already been mapped to the hardware.

In that sense, the .tflite is no longer just a model. It is a deployment artifact.

Execution is no longer decided at runtime. It is defined at build time.

This shift brings predictability, but also requires a different mindset.

eIQ: from convenience to necessity

Many developers intuitively felt this other shift:

On i.MX8, eIQ was optional.

On i.MX9, it becomes necessary.

Not because of marketing, but because the hardware depends on it. The Neutron NPU requires a dedicated compilation step. Without it, the accelerator cannot be used effectively.

NXP is no longer just providing silicon. It is delivering a hardware + software system.

Final thoughts

Looking at these platforms through the lens of TOPS alone is misleading.

What matters is:

- how data moves

- how memory is used

- how execution is planned

Two systems with similar compute capability can behave very differently depending on these factors.

The evolution from i.MX8M Plus to the i.MX9 family is not just a product evolution. It is a shift in how embedded AI systems are designed.

From flexible but opaque execution

→ to structured and predictable behavior.

From runtime adaptation

→ to compile-time control.

The i.MX937 sits right in the middle of that transition, making it accessible to a broader range of systems. And if this is the direction NXP is taking, then the most interesting part may still be ahead.

Because once you start designing the NPU, the memory architecture, and the toolchain as a single system, the room for improvement is no longer incremental. It becomes structural.

I, for one, can’t wait to see what NXP has in their bags next.

Enjoyed this article?

Embedded Notes is an occasional, curated selection of similar content, delivered to you by email. No strings attached, no marketing noise.

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Ut elit tellus, luctus nec ullamcorper mattis, pulvinar dapibus leo.